Introducing Kafka Connect for Elasticsearch

Current Kafka versions ship with Kafka Connect, a connector framework that provides the backbone functionality that lets you connect Kafka to various external systems and either get data into Kafka or get it out.
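To make the Kafka Connect idea a bit more concrete, here is a minimal sketch of how an Elasticsearch sink connector could be registered through the Connect REST API. It assumes Confluent's Elasticsearch sink connector plugin is installed on the Connect worker. The connector name, topic, and both URLs are placeholders chosen for illustration, not values from this post. The script only builds the request; the call that would actually send it is left commented out.

```python
import json
import urllib.request

# Assumed values for illustration only; none of them come from the article.
CONNECT_URL = "http://localhost:8083/connectors"  # 8083 is the default Connect REST port
ES_URL = "http://localhost:9200"


def build_sink_connector(name, topics, es_url=ES_URL):
    """Return the JSON-serializable payload that registers a sink connector."""
    return {
        "name": name,
        "config": {
            # Class of Confluent's Elasticsearch sink connector (assumed to be installed).
            "connector.class": "io.confluent.connect.elasticsearch.ElasticsearchSinkConnector",
            "tasks.max": "1",
            "topics": ",".join(topics),
            "connection.url": es_url,
            # Ignore record keys and schemas: document IDs are then derived from
            # topic, partition and offset, and mappings are left to Elasticsearch.
            "key.ignore": "true",
            "schema.ignore": "true",
        },
    }


def build_request(payload, connect_url=CONNECT_URL):
    """Prepare (but do not send) the POST request for the Connect REST API."""
    return urllib.request.Request(
        connect_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    payload = build_sink_connector("elasticsearch-sink", ["logs"])
    print(json.dumps(payload, indent=2))
    # To register the connector against a running Connect worker:
    # urllib.request.urlopen(build_request(payload))
```

The point of the sketch is that the whole Kafka-to-Elasticsearch path is described by configuration rather than by application code.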

You could implement your own solution on top of the Kafka API: a consumer that will do whatever you code it to do. However, that is time-consuming, requires at least basic knowledge of Kafka and Elasticsearch, is error-prone, and finally requires us to spend time on code management.
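To give a feel for what the do-it-yourself route involves, here is a small sketch of just one piece of such a consumer: turning a batch of consumed records into an Elasticsearch `_bulk` request body. The `Record` type and the `to_bulk_body` helper are hypothetical names introduced for this example. The sketch assumes every message value is a UTF-8 encoded JSON object. A real consumer would also need a Kafka client library (for example kafka-python or confluent-kafka), offset management, retries, and error handling, which is where most of the effort goes.

```python
import json
from typing import Iterable, NamedTuple


class Record(NamedTuple):
    """Minimal stand-in for a consumed Kafka record (hypothetical type)."""
    topic: str
    partition: int
    offset: int
    value: bytes


def to_bulk_body(records: Iterable[Record], index: str) -> str:
    """Build an Elasticsearch _bulk request body (NDJSON) from Kafka records."""
    lines = []
    for rec in records:
        # Deriving the ID from the record's coordinates means a re-delivered
        # record overwrites its document instead of creating a duplicate.
        doc_id = f"{rec.topic}+{rec.partition}+{rec.offset}"
        lines.append(json.dumps({"index": {"_index": index, "_id": doc_id}}))
        # Assumes every message value is a UTF-8 encoded JSON object.
        lines.append(json.dumps(json.loads(rec.value)))
    # The bulk API requires a newline after the last line.
    return "\n".join(lines) + "\n" if lines else ""


if __name__ == "__main__":
    batch = [
        Record("logs", 0, 41, b'{"severity": "info", "message": "started"}'),
        Record("logs", 0, 42, b'{"severity": "error", "message": "disk full"}'),
    ]
    print(to_bulk_body(batch, index="logs"), end="")
```

Even this small fragment has to make decisions about document IDs and message formats, which illustrates why a hand-written pipeline tends to be error-prone and costly to maintain.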

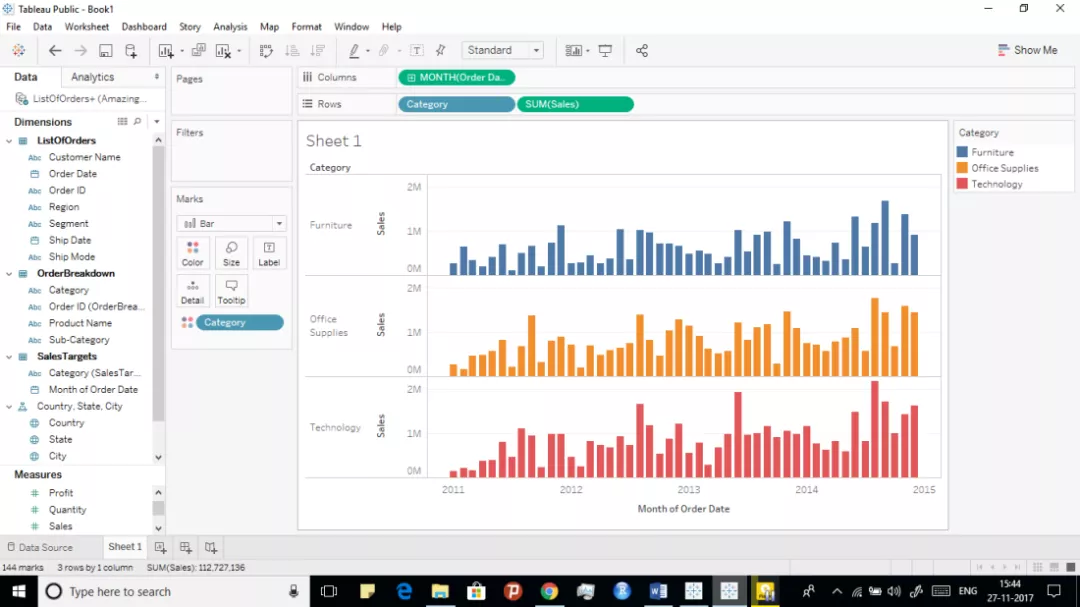

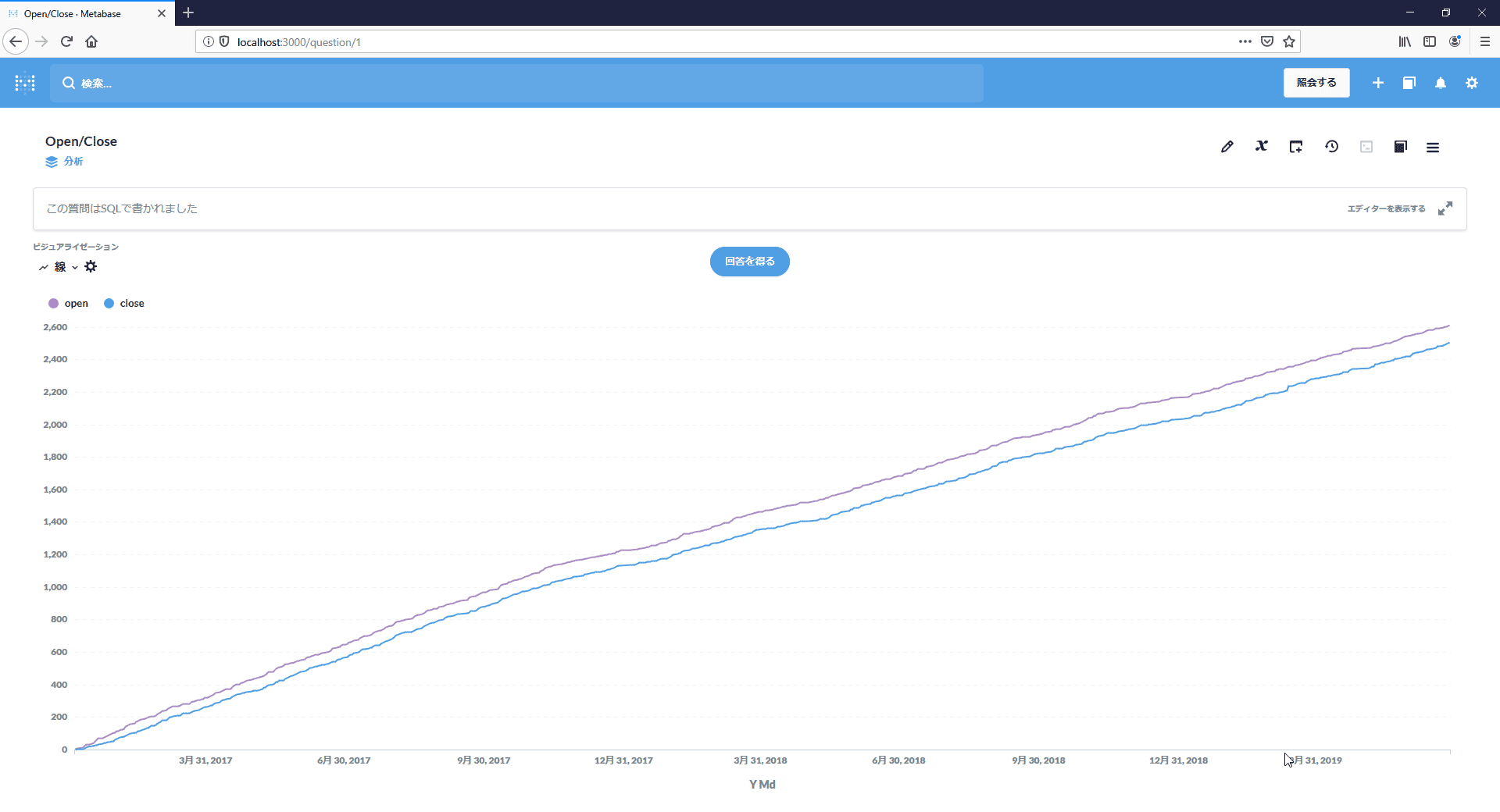

Most systems we see in our logging consulting practice use Kafka to achieve high availability and fault tolerance and to expose incoming data to various consumers, and they have an ingestion pipeline that looks a bit like the one pictured above.

There are lots of options when it comes to choosing the right log shipper and getting data into Kafka. We can use Logstash or one of several Logstash alternatives, such as rsyslog, Filebeat, Logagent, or anything that suits our needs; the lighter the better. Once you figure out how to get data into Kafka, the question of how to get it out of Kafka and into something like Elasticsearch inevitably comes up.
